March 30, 2004

Bodies in Motion

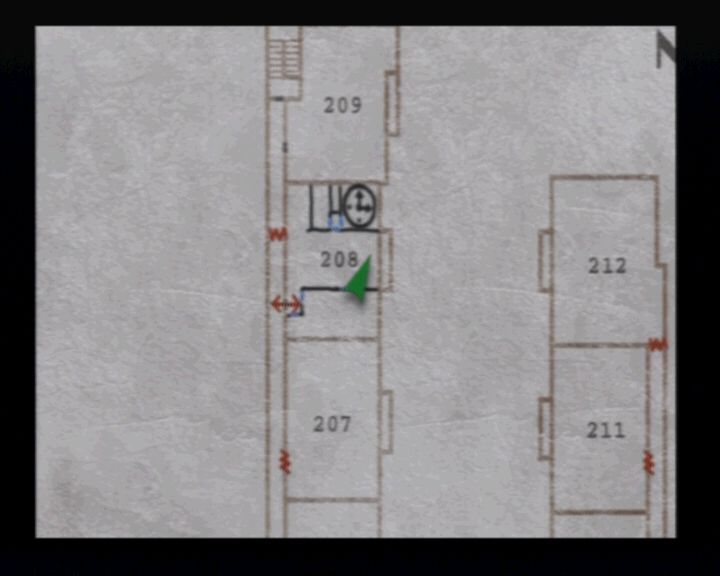

by peterb

Lately I've been playing a bit of the squad-level strategy game Jagged Alliance 2 (both the original and the new "harder than killing a puppy in cold blood without guilt" Wildfire version.) I generally prefer turn-based strategy to real time strategy games, but thinking about some of the design decisions the JA2 guys made sparked some thoughts about the fundamental choices designers make when building these games.

The fundamental choice, it seems to me, is whether your pieces move simultaneously or in turn. This is completely orthogonal to the issue of "real time" versus "turn based":

| | Turn-based | Real time |
|---|---|---|
| Single move | "Move your piece": Jagged Alliance, X-Com, Gladius, Fallout Tactics | (none?) |
| Simultaneous | "Plotted movement": Eastern Front, Combat Mission, TacOps, Galactic Gladiators | "Clicky-Clicky": Close Combat, Baldur's Gate / Neverwinter Nights, Warcraft, Age of Empires, Starfleet Command 2 |

As you can see, it's hard to classify some of these games, and the more you think about it the harder it gets. For simplicity, I'm declaring that games that let you pause the action still count as "real time". All computer combat games are turn-based in some internal implementation sense (e.g., in Warcraft your grunt gets a swing every X quanta of time). In my taxonomy, a game is "turn based" if those quanta are explicitly exposed to the user as "Now is the time when you must enter your orders, or your men will stand around, slackjawed, looking rather like 3D-rendered apes."

AI, in other words, can turn a turn-based game into a real-time one. Neverwinter Nights implements D&D style turns and rounds, but if your avatar is attacked he or she will at least have some default "hit the monster back" type behavior. Likewise, Starfleet Command 2 is an almost direct implementation of the completely turn-based system from the Star Fleet Battles board game, but successfully hides its turn based nature from the player.

So for me the vertical axis is the more interesting one -- simultaneity of movement. "Single move" means that in the context of the game, either the player is moving or shooting, or the enemy is, but not both at the same time. "Simultaneous" means that the enemy can be on the move at the same time as your pieces are.

I find it interesting that most of the squad level games are single move. The compromise that most of them seem to have adopted is "only one piece moves at a time, but if the moving soldier moves into the field of view of an enemy who has movement points left, the enemy may get an attack of opportunity." I've never played Advanced Squad Leader, but I have a suspicion that that is exactly how it resolves combat. Any ASL experts want to enlighten me?
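To make that compromise concrete, here is a minimal Python sketch of "one piece moves at a time, with opportunity fire." It assumes a square grid, a field of view that is only a distance check (no walls, no facing), and hypothetical action-point costs (`step_cost`, `shot_cost`). The names `Soldier` and `move_with_interrupts` are invented for illustration; this is not how Jagged Alliance, X-Com, or ASL actually implement it.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Soldier:
    name: str
    x: int
    y: int
    action_points: int
    sight: int  # hypothetical vision radius, in grid squares


def can_see(watcher: Soldier, target: Soldier) -> bool:
    # Simplified field of view: Chebyshev distance only, no walls or facing.
    return max(abs(watcher.x - target.x), abs(watcher.y - target.y)) <= watcher.sight


def move_with_interrupts(mover: Soldier, path: List[Tuple[int, int]],
                         enemies: List[Soldier], step_cost: int = 1,
                         shot_cost: int = 4) -> List[str]:
    """Move one square at a time. After every step, each enemy that can see
    the mover and still has enough action points gets an opportunity shot."""
    events = []
    for x, y in path:
        if mover.action_points < step_cost:
            break  # out of action points: the rest of the path is ignored
        mover.x, mover.y = x, y
        mover.action_points -= step_cost
        events.append(f"{mover.name} moves to ({x}, {y})")
        for enemy in enemies:
            if enemy.action_points >= shot_cost and can_see(enemy, mover):
                enemy.action_points -= shot_cost
                events.append(f"{enemy.name} takes an opportunity shot at {mover.name}")
    return events


# The guard only sees three squares, so it fires once Ivan reaches (3, 0),
# and then lacks the action points for a second shot.
ivan = Soldier("Ivan", 0, 0, action_points=6, sight=5)
guard = Soldier("Guard", 6, 0, action_points=5, sight=3)
for line in move_with_interrupts(ivan, [(1, 0), (2, 0), (3, 0), (4, 0)], [guard]):
    print(line)
```

The interrupt only works if the enemy still has points in reserve; an enemy that has already spent them just watches, which is the believability problem in a nutshell.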

The battalion and division level games are more sophisticated; as far back as Chris Crawford's Eastern Front, these games have generally allowed you to input orders for all your units, and then tell the computer "go." A notable exception is the Panzer General series of games, which essentially acts like the squad-level games in my list.

I don't think it's coincidental that Panzer General is universally viewed as a more approachable, mainstream game than (say) Combat Mission. "One piece moves at a time" semantics aren't just easier for developers to implement; they are also arguably easier for players to understand. Somewhere deep in the darkest recesses of our medulla oblongata, "go over there and whack the other guy with a stick" is a concept we all understand.

This is an intrinsic advantage of "move your piece" over "plotted movement" type games from a UI perspective: you click the mouse button and something happens. A piece moves. A little animation of a gunshot or an explosion plays. You whack the other guy with the stick. In a plotted movement game, the most that can happen is that the computer plays a little happy sound that means "Yes, orders received." UI matters. This is a big hurdle for plotted movement games to overcome.

Scale is another issue that may make it harder to implement simultaneous movement in a squad level turn based game. In a game like Combat Mission, the distance troops need to cover is large enough that they're generally not going to fly past each other by accident. A carelessly implemented squad level game could result in multiple rounds of combat with antagonists running past each other and then pirouetting around to fire off a shot.
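One common way to avoid the pirouetting problem is to resolve each plotted round in small sub-steps rather than all at once. The sketch below is hypothetical (it is not how Combat Mission or any other game named here works): two units close on each other along a one-dimensional corridor, and `resolve_round` checks the gap between them after every sub-step. Resolved in a single step, the units simply swap places; with enough sub-steps, they meet.

```python
from typing import Tuple


def resolve_round(a_pos: float, a_speed: float, b_pos: float, b_speed: float,
                  substeps: int, fire_range: float = 1.0) -> Tuple[str, float, float]:
    """Unit A advances toward higher positions and unit B toward lower ones.
    The round is split into `substeps` equal slices; after each slice we check
    whether they are within firing range or have already passed each other."""
    da = a_speed / substeps
    db = b_speed / substeps
    for _ in range(substeps):
        a_pos += da
        b_pos -= db
        gap = b_pos - a_pos
        if gap < 0:
            return "passed", a_pos, b_pos    # they ran through each other
        if gap <= fire_range:
            return "contact", a_pos, b_pos   # stop here and resolve the firefight
    return "no contact", a_pos, b_pos


print(resolve_round(0, 6, 10, 6, substeps=1))   # -> ('passed', 6.0, 4.0)
print(resolve_round(0, 6, 10, 6, substeps=12))  # -> ('contact', 4.5, 5.5)
```

A simple rule for picking the number of sub-steps: as long as the units start out of range and together close no more than `fire_range` per sub-step, neither can skip past the contact band. That is why 12 sub-steps (one square of closing per step) is enough here.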

Yet if we're honest, the "move your piece" games suffer from their own class of problems: believability. This is less of an issue in the fantasy games, but it does stretch credulity in, say, Jagged Alliance when one of my men has enough action points to saunter up to an enemy and calmly fire a Desert Eagle into his face, and the enemy just stands there for the entire 10-second period because he had higher initiative and had therefore already moved and used up all of his action points. So even though plotted movement games have usability hurdles to overcome, I think it's worth trying to overcome them, because they bring greater realism -- of a sort -- to the player. Clicky-clicky games bring that realism also, but at the cost of requiring more dexterity and -- potentially -- less strategic thought (at least, that's the argument. I'm not actually convinced that this is necessarily true, but I'll put it forward for consideration anyway).

Thus, I'd love to see more squad level games where combat is resolved simultaneously. I know of two, neither of them modern, and both from SSI: Computer Ambush was a "squad leader" type game with a World War II mise-en-scene, and Galactic Gladiators (and its sequel Galactic Adventures) was set in the 28th century. Computer Ambush's user interface was terrible (for instance, to move, you told a soldier "MRdnm" where "d" was the direction, "n" was the number of squares, and "m" was the "mode" in which you were moving). The Galactic games, by contrast, stand up well even against today's best squad combat games. The interface is good for their era, and combat is quick and brutal.

Here are some rules of thumb that I think one could use to make plotted movement games work as well as "move your piece" games:

- Rounds need to be short. The more orders you have to keep in your head before hitting the "go" button, the more confusing the experience will be to the player.

- The UI should encourage players to issue orders to squad members in terms of objectives and tactics, rather than in terms of controlling every step they take. It should be feasible to instruct a squad member "Get to this location on the map, moving cautiously and keeping under cover whenever possible" and not have them run out into the middle of a football field. Corollary: A good pathfinding algorithm is a requirement. Corollary: The interface needs to allow for a unit to report in for more orders if they get stuck or can't complete their objective.

- Likewise, instead of ordering "shoot at target X now," firing orders should be objective based: shoot indiscriminately to provide covering fire, only try to take out this target when you have a good shot, fire at anyone who approaches this area who you have a clear shot at.

- You should be able to issue orders to multiple units as a single squad ("Move to this objective cautiously. Leapfrog and cover each other as you go.")
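The objective-based firing orders above amount to a small policy that each unit evaluates on its own every time it spots someone. Here is a minimal Python sketch; the `FireOrder` names, the `Contact` fields, and the 0.6 "good shot" threshold are all hypothetical, and a real game would feed in its own line-of-sight and to-hit calculations.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional


class FireOrder(Enum):
    COVERING_FIRE = auto()   # shoot indiscriminately to provide covering fire
    GOOD_SHOT_ONLY = auto()  # engage the assigned target only when the odds are good
    HOLD_ZONE = auto()       # fire at anyone who enters the zone, given a clear shot


@dataclass
class Contact:
    name: str
    hit_chance: float  # 0.0 to 1.0, from whatever to-hit model the game uses
    clear_shot: bool
    in_zone: bool


def should_fire(order: FireOrder, contact: Contact,
                assigned_target: Optional[str] = None,
                good_shot: float = 0.6) -> bool:
    """Decide, without asking the player, whether to shoot at this contact."""
    if order is FireOrder.COVERING_FIRE:
        return True
    if order is FireOrder.GOOD_SHOT_ONLY:
        return (contact.name == assigned_target and contact.clear_shot
                and contact.hit_chance >= good_shot)
    if order is FireOrder.HOLD_ZONE:
        return contact.in_zone and contact.clear_shot
    return False


sniper = Contact("sniper", hit_chance=0.4, clear_shot=True, in_zone=False)
print(should_fire(FireOrder.GOOD_SHOT_ONLY, sniper, assigned_target="sniper"))  # False: odds too low
print(should_fire(FireOrder.COVERING_FIRE, sniper))                              # True
```

The player sets the order once per round; the unit applies it to every contact it meets while the round plays out, which is what keeps the number of orders the player must juggle small.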

Or, instead of developing rules of thumb like this, maybe I should just see if I can find someone to fund me to produce Galactic Gladiators 2004. I see maybe a Bruce Willis in the main role, with Julia Roberts as the love interest. It'll have lots of heart. And a happy ending. I promise.

Additional Resources

If you enjoyed this article, you might enjoy some of these links, too:

- A narrative history of Chris Crawford's Eastern Front. Crawford also has some interesting discussion of this game in his most recent book.

- An ancient review of Computer Ambush.

- Some interesting commentary from the author of Galactic Gladiators and Adventures. ("I stopped making games for two reasons. I was going broke. And I was beginning to dream in 6502 assembler.")

March 29, 2004

350 Miles for a Hot Dog

by peterb

I arrived in Toronto at about 2 in the morning, and the very first thing I did, after parking the car and checking in to the hotel, was to walk down Yonge Street to the nearest street vendor and buy a sausage, slather pickled peppers and mustard and kraut on it, and walk back to the hotel, eating my hot dog, victoriously. Hot dogs taste better in Yankee Stadium, or on Yonge Street. No one knows why. It's just that way.

I love Pittsburgh, but one of its biggest drawbacks is the near-absolute lack of street cart or vendor culture. The city council doesn't just not support it, they actively oppose granting new licenses (and then they write whiny, stupid articles in local papers wondering "Why don't more people move here?") Oh sure, there's the Saigon Sandwich lady on the Strip, who makes the best Saigon sandwich I've ever had, anywhere, and there's Dilly and her ribs, but they're in the Strip District where there's already a ton of great food. Half the joy of a street vendor is that you can find them in neighborhoods where there's nothing but overpriced restaurants and banks. Hand them $2, walk away with the best meal you'll have all day. Other than the Strip ventures, there are a few truck-based places that operate near the university campuses, and one Asian cart on the South Side. That's pretty much it.

Another aspect of Toronto street food that I love: everyone eats it. When I lived in New York, you could pretty much divide people -- on sight -- into those that will eat a hot dog from a cart and those that won't (there was also a third category, which was "I would eat a hot dog from a cart, but I decided to go to Nathan's in Coney Island, instead.") In Toronto, dividing people up this way isn't possible, because they don't let you into the city if you don't eat street vendor hot dogs (all of the vendors sell vegetarian sausages, too, so you're out of excuses). Every time I go, I see someone wolfing one down whom I'd never expect to see chomping on an Italian sausage in public. Last trip, it was the 60-something Asian grandmother, squatting on the curb (onions, peppers, mustard). This time, a Muslim man and his wife (in a chador!) walking down the street eating their very delicious looking beef dogs (I presume), which were covered in chili.

It was a long drive. But it was a really good hot dog.

More culinary notes from Toronto will follow soon.

March 26, 2004

Blame Canada!

by peterb

I'll be in Toronto this weekend, eating good food and visiting good bookstores; probably no updates until Monday.

March 24, 2004

Got (raw) milk?

by peterb

Note: I originally published this article at Tea Leaves.

For a long time I've been fascinated by the idea of being able to buy and drink raw milk (or as some would have it, "real milk") rather than the pasteurized and homogenized product we all know and love. Part of it is the (realistic) fantasy of being able to make real clotted cream and part is the (unrealistic) vision of myself living in the Dordogne making an earthy, runny cheese from lait cru, which I bring to market each week. After the market, I would gather with my fellow peasant workers of the terroir and we'd sit and quietly get drunk on cheap red wine and complain about stupid Americans and the constantly striking truck drivers.

I always assumed that I'd never get the chance to make either of these fantasies come true, but thanks to a well-placed word, I now have a gallon of raw milk. Time to get to work.

Mystery surrounds raw milk. I had always assumed that it was totally illegal to sell it, everywhere in the US. It turns out this isn't true. Although it is technically legal in my state, I quickly discovered that since everyone (like me) thinks it's illegal, trying to get someone to sell you some in any sort of retail setting is difficult to impossible. It was on a tour of a cheesemaking facility north of Pittsburgh that I got my first useful clue -- we saw how the farmers delivered raw milk, which the cheesemaker then separated the cream out of, and pasteurized. I asked the owner how I could get some raw milk. Looking at me strangely, he said "I guess you should find a farmer."

The only problem was, I didn't know any farmers.

At an Easter dinner, I got into a conversation about cooking and baking; I'd been making a lot of crème fraîche lately, and I let slip my yearning for raw milk to play with, and that I was giving up on ever getting any, since I didn't feel comfortable driving up to some farmer I didn't know and asking him if I could fondle his milk cow's teats. One of the people dining with us said she knew a dairy farmer, and offered to introduce me. I leapt at the offer with enthusiasm. I got a call a few days later setting up a time and place -- "It depends on the milking schedule, see." I was already liking this more and more.

Bob and Tomalee's farm is nestled in Somerset County under the shadow of giant, elegant, and intimidating windmills. Tomalee is short, voluble, and hugs strangers, all the while accompanied by an entourage of placid dogs and scrawny farm cats. Bob's face is cheerful and red, and he clearly relishes playing straight man to Tomalee's trickster. Tomalee seems puzzled by my need for raw milk ("Clotted cream? Never heard of it.") but is thrilled for the chance to show off her menagerie ("my friends") to my friends and me. Suppressing my urge to say "Yeah, yeah, lady, just hand over the loot!" I take the tour. And what a tour.

Tomalee's friends are many and varied. Apart from the milk and meat cows, which don't "count," there are of course the pet cows. Which are like the other cows, only cuter. Or something. But my first indication that something is truly different about this farm are the hens. They have little antennae. "Are those peahens?" I say. "Yep!" says Tomalee. And there, in the corner, is a peacock, with a five foot tail. "Did you know that the peacock is a tropical jungle animal?" she asks. "I learned that in science class." "Huh," I say, resisting the temptation to ask her "So what the hell is this one doing in Western Pennsylvania?" Next to the peacock are the rabbit cages, which are next to the pen with the goats and a single (still unsheared) ram.

On a pile of hay near some of the pet cows a black farm cat is sleeping, near some piled up blankets. Then the blankets move. They're not blankets -- they're a potbellied pig that has grown to the size of a small washing machine, looking disturbingly like Old Major from the animated version of Animal Farm. "That's Hunter!" says Tomalee. She walks over to Hunter and unleashes a torrent of high pitched baby talk. Tomalee explains that Hunter is real lazy. We walk down an aisle of about 8 "pet" cows. "We don't eat friends," says Tomalee, and I nod. "Never eat anything you've been introduced to, like in Alice in Wonderland." She looks at me funny again, and shakes it off. There's also a horse. I confide that I was scared of cows when I was younger, because they seemed so huge. Tomalee says she's scared of horses, when they're not in a pen, for the same reason.

There are about 50 milk cows in the barn, standing, sitting, eating, pissing (the first time I hear this, I start looking around for the firehose) and generally waiting to be milked. Each animal has a tag on its ear with a number. Above each stall is a sheet of paper with the name of the cow written on it ("Debra", "Marcy", "Vanna"...) and notes on what type of feed it gets -- grass, corn, soy. I've been absentmindedly petting the cows as we walk along. They seem to like being scritched on the sides of their face; several enjoy licking my arm. On my second time trying to pet Debra between her eyes, she lifts her head up and slides it roughly down the side of my arm, turning it and pushing my arm away.

I have just been brushed off by a cow.

Bob is talking to one of my friends about using Bovine Growth Hormone (BGH) to increase milk production, and how he doesn't use it because he thinks it overworks the cows. These people wouldn't think to market their products as organic, yet they have an intrinsic distrust of the Monsanto corporation. It's like I'm in heaven. I am salivating at the prospect of trying their milk.

Yellow Golden Pheasant

I promise Tomalee I'll bring some clotted cream with me when I visit again. She says she looks forward to finding out what it is.

The milk itself, when I finally try it, has a musty, almost cheeselike taste, with just a hint of a sulfurous tone. The cream does indeed rise to the top. Although the clotted cream didn't turn out that well, the crème fraîche was awesome. With spring just around the corner, I'm looking forward to going back to visit Tomalee and her friends. I think Debra the cow and I have some unfinished business.

Additional Resources

These links might be of interest to you:

- The Campaign for Real Milk maintains a summary of laws about raw milk around the United States.

- The US Department of Agriculture sucks for not allowing us to buy, sell, and eat yummy raw milk cheese.

- The milk you drink might contain Bovine Growth Hormone. Sorry.

- The deceptive advocacy group PCRM is trying to convince people that milk is poison. Here's hoping I'm never invited to any of their dinner parties.

March 23, 2004

Middle East^H^Hrth

by peterb

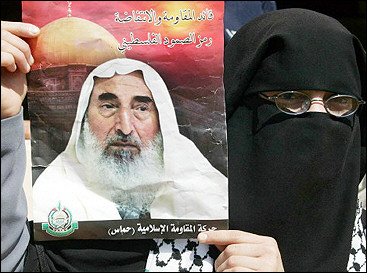

Lebanese mourn death of Saruman, Wizard of Isengard

March 22, 2004

Two Overpriced Ports

by peterb

Buying vintage port is like going on a blind date in Manhattan. No matter how many close friends vouch for your blind date, you really can't know in advance whether it will be fun or a disaster, and the only thing you know for sure is that it's going to cost a lot of money.

You can't even count on having a good time if the date isn't completely blind. Since vintage port is a wine that we often keep for years, it's not unusual to end up in a situation where one bottle of a given house and vintage is superb, and then the next bottle from the exact same batch is awful, having spoiled because you didn't rebottle it; this happened to me with a bottle of 1977 Smith-Woodhouse. I'm still recovering from the trauma. There are people who rebottle their vintage port periodically to avoid this outcome. I don't personally know any. (Pet peeve: if the wine industry would just get over itself and admit that "real" corks are completely inadequate to the job they're being asked to do, and move to some less stupid technology such as a bottlecap and wax seal, this wouldn't be an issue.)

So vintage Port, because of value issues, remains for me a rare luxury. Most of the Port I drink, when I drink Port, is just straightforward nonvintage ruby Port; I'm fond of Cálem, but the variance in quality between all of the great Oporto houses in this segment of the market is very small. It's hard to go too far wrong. Occasionally I'll grab an unassuming bottle of cheap white Port, when I can find it, which isn't often. That's a little riskier (and many people don't enjoy white Port). I dislike tawny Port intensely; I'd rather drink Sherry.

Ruby Port is a fine drink. Typically it will run you around $10 to $15 a bottle in the US, a little less overseas. The houses in Oporto, sensing an under-served market ("I want to be snobby about my wine, but I don't want to pay for it.") have developed a class of wines in recent years referred to, broadly, as "Late Bottled Vintage." In one of those amusing false cognates, "Late Bottled Vintage" is Portuguese for "not vintage." Like vintage wines, LBVs come from a single year's harvest, but spend much longer in oak casks compared to true vintage Ports, and much less time maturing in the bottle. This forces some complexity into the wine, at the cost of subtlety. They're ready to drink earlier, although they will generally have a lighter body. A typical LBV will run you between $25 and $40.

Late Bottled Vintages have been a thundering success for the houses of Oporto. I believe this isn't so much wannabe wine snobbery as a genuine desire to drink good Port, and drink it now: the only vintage Ports most of us can reasonably afford are the truly young ones. Buying a case of young vintage Port is a risky endeavor, because you don't know how it's going to turn out, and drinking a bottle of vintage Port before it's matured feels like an ethical violation, at least to me. "Infanticide" is the word bandied about by Portophiles. Late Bottled Vintages are an understandable compromise.

The houses, emboldened by the success of the Late Bottled Vintage marketing experiment, have now developed a new product to capture the dollars lying between the $10ish Ruby price point and the $30ish LBV price point. These wines are referred to as "reserve" Ports. "Reserve" has a long and hoary tradition in the wine industry outside of the niche Port market. Roughly, it translates to "this wine isn't any better than our standard wine, but I somehow think I can convince you to pay more for it." On very rare occasions, you'll find a reserve that is actually noticeably superior to its undesignated cousins. But less often than you would hope.

I succumbed to the marketing this week, mostly because my local wine store (I live in Pennsylvania, where human life is cheap and the State controls all liquor sales with an iron fist) didn't carry an adequate selection of ruby Port. I decided to experiment, and picked up two "reserve" class Ports: Graham's Six Grapes (on sale at $19/bottle) and Sandeman's Founder's Reserve ($18). As usual, we tasted the Ports with neutral crackers and a selection of good blue cheeses (Port and Stilton, Roquefort, or Cabrales is truly an incomparable pairing).

Six Grapes

The Sandeman's Founder's Reserve was acceptable, although, arguably, still overpriced. Much sweeter than the Six Grapes, it managed to stay just under cloying. It did this mostly by having an interesting middle -- there's a sour note that was equal parts offputting and intriguing, a bit like the hint of sour milk in Hershey's chocolate. It lacked the attenuated wino-on-Saturday-night attack (and sustain, and release) of the Graham's. The tail was noticeable if not outrageous. I initially thought the nose was a bit on the light side, but that was because I was comparing it to the beaker of rubbing alcohol that was the Six Grapes; when I tasted it again in isolation it was fruity, with almost a bright citrus or sangria aroma.

My foray into the world of "reserve" Ports has, simply, reconfirmed my prejudices: from now on, when I'm not willing to open one of my vintage Ports, I'm going to stick with a standard ruby Port from a house that I know and love. I'm sure there must be some reserves out there that might be worth the premium, but I'm not inclined to experiment much more with this particular marketing label.

Additional Resources

If you enjoyed this review, the following links may be of interest to you.

- The Wine Spectator's Port basics page is a good introduction to what Port is and how it is made.

- My favorite house is Cálem. Visit them if you're ever in Oporto.

- Almost as important as finding the right wine is finding the right cheese.

- Daniel Rogov has reviewed hundreds of Ports. He hates Six Grapes too.

Digital Picture Workflow

by psu

One of the digital photography web sites recently published an article on how Sports Illustrated manages its digital photographs. The piece described the process of shooting and editing 16,000 pictures during the Super Bowl. After reading it, I realized that the workflow that I've come up with for managing my own personal digital pictures is similar to SI's.

Workflow is a word that gets tossed around a lot when referring to the management of digital pictures. But, there is nothing really new going on here. Even in the film days, we all had a workflow. To wit:

1. Shoot the film. Organize the shot film into buckets.

2. Process the film buckets. I always used Tri-X and D76 1:1.

3. When the film was dry, cut it into strips, put the strips into storage containers. Organize the storage sheets somehow.

4. Make contact sheets. Pick the frames you like. Label and organize the contact sheets to relate them to the stored film.

5. Make proofs of the good frames, pick the ones you want to print well. Label the proofs and final prints so you can find the negative when you need to make others.

And so on.

The idea here is to be systematic about how the pictures are processed and stored so that you find the pictures you need later.

It turns out you need to do exactly the same sort of thing with digital pictures. The medium makes some of this easier and some more tedious. But, it's important to have a structured and repeatable process, since you don't want to lose that one file with a favorite picture in it.

The Big Picture

I shoot with 2 cameras, a small point and shoot that makes JPEG files and a bigger digital SLR that can make JPEG files, but also allows me to store the pictures in a "RAW" format. The following is what I do with the pictures from shooting to final print.

1. With the SLR, I shoot RAW files only, except when I know I might need to shoot more than 100 pictures before downloading. I have a single 1GB flash card, which fits 100 RAW pictures. With the P&S, I shoot JPEG.

2. Every once in a while, I take the card out of the camera and stick it in a card reader. I use iView Media Pro to download and catalog the initial pictures. All pictures for a given year are downloaded to a single folder on my laptop called "2004".

3. I use iView to rename the picture files to be unique by adding on the capture date. iView automatically keeps track of this date for me, so it's handy.
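The renaming step is easy to reproduce outside iView. The sketch below is an assumption about the format, not what iView actually produces: it prefixes the camera's original file name with the capture date and time, which keeps files sorted chronologically and stops two different `DSC_0042.NEF` files from colliding. Python's standard library can't read EXIF, so the capture time is passed in; in practice it would come from the EXIF `DateTimeOriginal` tag via some EXIF reader. `dated_name` and `plan_renames` are hypothetical helpers.

```python
from datetime import datetime
from pathlib import PurePath
from typing import Dict


def dated_name(original: str, captured: datetime) -> str:
    """Prefix the file name with its capture time (hypothetical format)."""
    stamp = captured.strftime("%Y%m%d-%H%M%S")
    return f"{stamp}_{PurePath(original).name}"


def plan_renames(files: Dict[str, datetime]) -> Dict[str, str]:
    """Map each original path to its new file name, refusing to continue if two
    pictures would end up with the same name (the names are used as keys)."""
    plan: Dict[str, str] = {}
    seen = set()
    for path, captured in files.items():
        new_name = dated_name(path, captured)
        if new_name in seen:
            raise ValueError(f"duplicate file name: {new_name}")
        seen.add(new_name)
        plan[path] = new_name
    return plan


# Two different pictures that the camera happened to give the same counter value.
shots = {
    "card1/DSC_0042.NEF": datetime(2004, 1, 5, 10, 0, 0),
    "card2/DSC_0042.NEF": datetime(2004, 3, 14, 15, 9, 26),
}
for old, new in plan_renames(shots).items():
    print(old, "->", new)
```

Two frames shot within the same second would still collide; raising an error in that case is deliberate, since silently overwriting a picture is the one outcome a workflow like this exists to prevent.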

4. For the RAW pictures, I convert each picture from RAW to a small proofing JPEG file using a batch script and Adobe Camera RAW inside Photoshop. For JPEG files, I also make a small proofing JPEG, just to be consistent. All the JPEG proofs go to a different folder called "2004-JPEG". The JPEG files are tagged with an sRGB color profile. More on this later.

5. I browse the JPEG proofs to pick pictures I like.

6. The good ones get reconverted and then turned into files for printing or for display on the web. I have a script that generates the albums on the web site. These albums are driven off another collection of JPEG files that are completely separate from the main catalog. That way if I need to back up the web site it's easy. Also, the image file names on the site match the ones in my catalog, so the RAW file or JPEG that the picture came from is easy to find. iView also lets me tag pictures with keywords and such to make them easier to find.

7. All RAW and original JPEGs are backed up to 3 external hard drives stored in two places. I keep the originals online on my laptop for a year, and then move them offline and just keep them on the backup drives.

For prints, I have to come up with a way to organize the print files and attach them to the originals. I don't make many prints, so I haven't worked this out yet.

The Rationale

I came to this particular workflow after about a year of fussing around with a few different schemes. So here is the rationale for the steps I take above.

1. iView is simply the fastest and most straightforward cataloging program I've found. It doesn't have server features, but I don't need that. It reads EXIF keywords, keeps track of color profiles correctly, and so on. It also automatically lets me browse the archives by date, keyword, and other queries. Very nice.

2. JPEG proofs turn out to be very handy to have for multiple reasons. First, browsing a ton of small JPEGs to find the good ones is a lot faster and easier than browsing RAW files or larger JPEG files. My wife likes to do this using the slide show feature in iView, and this is only really usable with smaller JPEGs. Second, the small JPEGs make a good base image for the web albums, so I'd be making them anyway. Finally, having the small JPEGs lets me carry around my whole picture archive on my laptop. The laptop disk isn't really big enough to hold more than a single year of RAW pictures, but I can hold many years of JPEGs and still have enough room to keep my RAW files for the current year online.

3. I use hard disks for backup because burning CDs or DVDs just takes too much time and is no more archival. My plan is to just buy a big FireWire drive every year and cycle the entire archive to it. For the foreseeable future, disks will keep getting bigger faster than I can shoot more pictures.

4. The only manipulation I do with the image file names is to tag them with the capture date of the picture. Putting any more meta-data into the filename is problematic in various ways. Better to have it in the iView catalog. It's also handy to know that your file names are always unique so you can use them as database keys. The main thing to do is to make sure you use the same filename for the same picture everywhere the picture appears.

5. I like shooting RAW files on the SLR because it's really easy to post-process them to fix problems like color balance under mixed lighting and general underexposure if I or the camera messed up. Doing this with JPEG files is trickier.

Someone asked me how I keep the two catalogs I make in sync. The answer is that I don't, really. The JPEG catalog is for proofing only and I only use it to navigate to the actual NEF files in the main catalog.

But, most of the meta-data (dates, keywords, labels, etc) that I might attach to a file gets put into the EXIF part of the file which is preserved when I convert from NEF to JPEG. If I change a lot of this info, all I have to do to sync the catalogs is reconvert the NEF files and then rebuild the JPEG catalog based on the new information.

Oh, so for reference, what I do that is similar to SI is:

1. Keep both JPEG and RAW around.

2. Proof using JPEG

3. Reconvert the RAW file and process for printing.

4. Use a good fast cataloging program for meta-data.

My final thoughts are a quick meditation on color profiles.

Why sRGB?

Color profiling and color spaces are a large and confusing topic. Rather than get into a long and abstract discussion about color spaces, device profiles, gamut and so on, I'll outline my reason for using sRGB for everything via a simple problem that I was trying to solve:

I use a Mac. My wife uses a PC. I needed my web pictures to look at least similar on the two machines.

It turns out that the easiest way to do this is to always convert pictures into the sRGB color space, and make sure that the JPEG files on the web site are correctly tagged with this color space. Why should this be?

Historically, Macs and PCs have displayed images very differently. Apple's default display setups use a gamma of 1.8 and some pre-defined color balance. PCs use a gamma of 2.2 and some other predefined color balance. It turns out that sRGB is a color space that is defined to model a sort of generic PC display. What does this mean?

Basically what it means is that for every (r,g,b) value in the image file, the display software will look at the sRGB profile and transform the numbers into a new (r,g,b) so that the whole image displays with an overall gamma of 2.2 and a certain color balance. The exact transformation that takes place depends on the display device. On a Mac, the transformation makes everything a bit darker and more contrasty. On the PC, the transform does almost nothing.
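Here is a rough numerical picture of that transformation, in Python. It makes two simplifying assumptions that real color management does not: it treats sRGB as a pure 2.2 power curve (the actual sRGB curve is piecewise, with a short linear segment near black), and it ignores white point and color balance entirely. `to_display` is a hypothetical helper, not a ColorSync call.

```python
PC_GAMMA = 2.2   # simplified stand-in for the sRGB tone curve
MAC_GAMMA = 1.8  # classic Mac default display gamma


def to_display(value: int, source_gamma: float = PC_GAMMA,
               display_gamma: float = MAC_GAMMA) -> int:
    """Re-encode an 8-bit channel value so that a display with display_gamma
    shows the same brightness a source_gamma display would have shown."""
    v = value / 255.0
    intended = v ** source_gamma                 # relative brightness the file asks for
    return round(255 * intended ** (1.0 / display_gamma))


for value in (0, 64, 128, 192, 255):
    print(value, "->", to_display(value))
```

Black and white stay where they are, while a mid-gray of 128 comes out at about 110: the Mac-side darkening described above. With equal source and display gamma (the PC case), the function hands back its input unchanged.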

But, the overall result is that if I look at the picture on my Mac with software that respects the color profile, it will look similar to what my wife sees on her PC. Happily, most of the display software on the Mac does the right thing because it all hooks into Colorsync. Earlier versions of Mac OS X didn't have this universality, so if you look at my pictures in Safari under Jaguar, they will look too bright and washed out.

In digital photography circles, sRGB is scorned as a poor, lowest-common-denominator color space. You'll hear a lot of complaints about its limited color gamut, for example. This all might be true, but for me, sRGB makes perfect sense because:

1. I'm mostly concerned with web display, and this makes sure that the pictures look right even on PCs that don't have color management.

2. Most inkjet printers and digital minilab machines do automatic color balance based on an assumption that you are using sRGB. Sticking with this saves me an extra calibration headache. I haven't noticed that I'm losing a lot in sRGB, but if I did, I'd just redo the job in another color space.

3. Most point and shoot cameras implicitly capture in sRGB and tag their JPEGs as such, so I don't have to do anything special.

So there you have it. Maybe another time we'll talk about color calibration some more. Except it gives me a headache.

March 20, 2004

Metamechanics


by peterb

Game mechanics: the underlying rules and goals of a game. How do you decide what a player is allowed to do? When has a player won? How do player actions affect the game? The mechanics of a game are the part of a game that is not narrative.

Some basic game mechanics:

- Run around in a circle; first one to finish wins. (all racing games)

- Kill everyone else and/or capture the flag (most FPSs)

- Move the ball to the scoring zone the most times (sports games)

- Capture and hold victory points (war games)

- Wager and win tokens (gambling games)

Compound games exist: Get the ball in the goal while killing everyone (Deathrow). Run around in a circle while killing everyone (Wipeout XL, Quantum Redshift). There are plenty more. What I've been thinking about today is not so much mechanics in the sense of the specific ways games play, as in these examples, but in what I think of as "meta-mechanics," the classes that the rules themselves fall into. For example...


A similar decision is whether there is a role for random chance in a game. A game such as chess, checkers, or go is a "pure" game; the outcome is determined entirely by where the pieces are placed. At the other end of the spectrum is Candyland, where the player has no control whatsoever over his move, and just obeys the random chance of the card he pulled from the stack. Many in-between shades exist, from The Game of Life (where you get to make essentially one decision: "Go to college" or "Don't go to college") to Monopoly (where in the early part of the game you can decide whether to purchase or not purchase each property you randomly land on) to The Settlers of Catan, where you make early wagers on probability and make non-random moves that will provide payouts later (or not) based on a die roll.

There is a school of ludological snobbery that says that "pure" games that have no randomness -- such as chess, go, and checkers -- are inherently superior to games with a random element. The logic here seems to go something like: games are a competition, and if I can't prove my superiority every time by applying the power of my gigantic brain, then the game sucks. I think that's clearly wrong -- randomness can have a place in games, just like in life. De gustibus non est disputandum: there's no accounting for taste.

It is an increasingly common trope in videogames to let the player substitute dexterity for randomness. For example, in Gladius, a well-designed tactical combat game, the player moves his pieces around an arena and chooses from a variety of attacks. There's an option to have the attack's success or failure be determined by a die roll (which would be the likely method of resolution if Gladius were a board game), but the default mode is to display a "skill bar." The game requires the player to press buttons on the controller in a certain pattern or with a certain rhythm. If the player completely muffs it, the piece she is controlling misses its opponent. If the player gets it right, the piece hits. If the player gets the rhythm just so, her piece performs a "critical hit" for extra damage. Many of the execrable Final Fantasy games use a variant on this system as well.
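The two resolution styles can be put side by side in a toy sketch. The d20 roll, the "natural 20 is a critical" rule, and the timing windows (30 ms for a critical, 120 ms for a hit) are all invented for illustration; they are not Gladius's actual numbers or code.

```python
import random


def resolve_by_dice(to_hit: int, rng: random.Random) -> str:
    """Board-game style: roll a d20; a natural 20 crits, otherwise meet to_hit to land the blow."""
    roll = rng.randint(1, 20)
    if roll == 20:
        return "critical"
    return "hit" if roll >= to_hit else "miss"


def resolve_by_skill_bar(offset_ms: float,
                         hit_window_ms: float = 120.0,
                         crit_window_ms: float = 30.0) -> str:
    """Dexterity style: the outcome depends on how far the button press landed from the beat."""
    error = abs(offset_ms)
    if error <= crit_window_ms:
        return "critical"  # got the rhythm "just so"
    if error <= hit_window_ms:
        return "hit"       # close enough
    return "miss"          # completely muffed it


rng = random.Random(2004)
print(resolve_by_dice(11, rng))     # depends on the roll
print(resolve_by_skill_bar(10.0))   # critical
print(resolve_by_skill_bar(-80.0))  # hit
print(resolve_by_skill_bar(250.0))  # miss
```

The interface is the same in both cases (an attack comes in, an outcome comes out); the only design choice is whether the input to that function is a random number or the player's timing.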


What other metamechanics can we identify? Why do some "strategy" gamers so vehemently prefer randomness to dexterity, when that randomness is only a part of the genre because doing more sophisticated modeling in a boardgame context is ponderous? Shouldn't the "roll a die" method of determining an outcome in RPGs and strategy games be outmoded, now that we have hyperintelligent transdimensional computers to do the hard modeling work for us? Is "roll a die" still the preferred method of combat resolution simply because it's easier for developers to implement, and we're all lazy?

Additional Resources

Score points by moving your piece into the target areas below and clicking.

- Eric observes that talking about these things can be hard.

- There are plenty of people that think about this stuff more carefully than I do.

- Everyone knows where to get videogames, but if you have no local board-gaming shop, you can get great board games from Funagain.

- It bothers me that Carabande (and its inferior remake) are so expensive.

March 18, 2004

La Prima Espresso


by peterb

In a wonderful rant, psu talks about the perfect cup of coffee, and how there's only one place in Pittsburgh -- La Prima Espresso -- to get it. His conclusion is that it's pointless to buy an expensive espresso machine like a Silvia because it still won't be as good as what we can get at La Prima.

He's right and wrong. Whether or not getting a fancy espresso machine is "worth it" is of course a judgment call, but I agree that generally what you're going to make for yourself isn't going to be as good as what you get at a good café, if only because of what I like to call the "hot dog in Yankee Stadium" effect. You can come really close, but fundamentally no matter how interesting I make my house, it's not likely to have old Italian men smoking cigars and playing Scopa and trying to hit on the cute Italian teacher from Shadyside Academy while people come in and buy fresh pastries from Antonio next door and jostle to make sure that Elio pulls their coffee instead of the annoying kid with the buzz cut.

OK, maybe not everyone likes that ambience. But I do. Hot dogs taste better when you're actually in Yankee Stadium.

We have a Silvia at work. You can make great coffee with it. But fundamentally, a cappuccino at work doesn't taste as good as one near Campo dei Fiori. There's also the financial angle: a cappuccino is $3/cup at La Prima, rounding up. That's 200 cups of coffee before you break even on a Silvia / Solis Maestro combination, and that's if we only count equipment and not raw materials. Of course, you may find joy in the process of making the coffee yourself, which is hard to put a price tag on. But if you don't, unless you're drinking many per day, it makes sense to pay for your coffee by the dose instead.
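The break-even figure is simple division: 200 cups at $3 implies roughly $600 of equipment, in line with the $500 machine plus $100 grinder figures quoted for home espresso setups. A small sketch that also lets you subtract a per-cup ingredient cost; the function name is hypothetical, and the $0.50 per home-made cup is an assumed number for illustration, not one from the text.

```python
import math


def cups_to_break_even(equipment_cost: float,
                       cafe_price: float,
                       home_cost_per_cup: float = 0.0) -> int:
    """Cups you must make at home before the equipment pays for itself."""
    savings_per_cup = cafe_price - home_cost_per_cup
    if savings_per_cup <= 0:
        raise ValueError("home coffee must cost less than the café's for a break-even point to exist")
    # Round up: a partial cup doesn't count as breaking even.
    return math.ceil(equipment_cost / savings_per_cup)


print(cups_to_break_even(600, 3.00))        # 200, equipment only
print(cups_to_break_even(600, 3.00, 0.50))  # 240, with an assumed $0.50 of beans and milk per cup
```

Counting raw materials only pushes the break-even point further out, which strengthens the "pay by the dose" conclusion.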

Personal to psu: the lousiest, skankiest train station in Rome makes a better espresso than Café de Flore, although I make no promises as to ambience.

Pete's also right and wrong about Starbucks. He's right that it's a shame that they promote the retarded Seattle-style cappuccino -- newsflash, geniuses, the hood in a cappuccino is supposed to come from the milk mixing with the foam from the coffee, not from freakishly aerated milk -- but I think he forgets that just 10 years ago if you wanted a cup of coffee on the Pennsylvania Turnpike you pretty much ended up drinking stale Maxwell House that had been cooking for 12 hours. Starbucks' brewed coffee, at least, is better than that.

Synchronicitously, Goob gives some tips on how to make good coffee at home without spending $500 on an espresso machine and $100 on a grinder. His advice is good -- freshness really does trump everything else. For home use, I'm pretty happy with my Bodum vacuum pot, which only ran me about $50 or so.

Additional Resources

Get your fix on:

- La Prima Espresso has a web site. The guy in the picture in the lower right is Elio, who pulls the best coffee.

- psu's coffee rant. Paul's coffee advice.

- One popular coffee fanatic's site is Whole Latte Love

- For window shopping, I like CoffeeGeek

- Too Much Coffee Man is the cartoon for the web-savvy caffeine addict.

- The Rancilio Silvia is a nice machine.

March 17, 2004

Europa Universalis


by peterb

Since my last article was spent talking about console games and how great they are, let me shift gears and talk about a PC game I've been playing lately: Europa Universalis II.

This is a game with beautiful, beautiful maps. Gorgeous maps. Maps that literally take your breath away and make you marvel at them. You'll sit back, contemplating the intriguing position of the Low Countries in relation to the principality of Brandenburg, and say -- perhaps out loud, even -- "Damn that's one beautiful map." See, I like maps. I love them. And the tragedy of Europa Universalis, for me, is that the game isn't as good as its maps. I keep playing the game, even though I don't like it that much, because I'm convinced that it has to be better than it is. Because of the maps.

At first blush EU II looks like a standard "conquer the world" civ-alike, perhaps with a feel akin to Merchant Prince, but it's really more of a history simulator than an actual game. It brings some interesting mechanisms to bear on the conquer-the-world genre that I enjoy on their own, yet which fail to cohere into a playable whole. Let's first take a look at some of these mechanisms by themselves and at some of the game's positives, and then talk about the negatives.

Time Period

EU covers the period from 1419 through to the early 1800s. There are optional campaigns that cover smaller lengths of time, if you're not up for the full challenge of 400 years modeled a single day at a time. Yes, I said one day at a time. The days fly by, but this is not a game of lightning warfare and swift technological change; the sands of time drip through the hourglass one by one. I've been playing the "France 1492" campaign for perhaps two weeks now, and it's still only 1526. This is not a quick game.

Combat and Conquest

One of the aspects of the game which I intensely disliked when I started playing but have come to admire is how, exactly, conquest works. In your typical civ-alike (or Risk-alike), you march your troops off to some enemy province, dice are rolled, blood is spilled, and when all is said and done, to the victor goes the spoils. That's kind of how it works in EU II. But not really.

First, your invading army has to dispose of any standing troops in the province; that works pretty much like you'd expect. Then, you have to mount a siege. You can expect your average siege to take between 6 months and a year, or longer. Eventually, you'll starve the province into submission and the province is yours. Except it isn't: sure, you might have possession of that province, but if other leaders (and God!) don't recognize your claim to it, you are merely a pretender. No, if you want to claim that province you'll have to earn it the old-fashioned way: by sending your best councillors off to negotiate peace, and getting the country you wrested the province from to agree to give it to you (unless the entire country is just a single province, in which case you may simply take it).
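The rule being described separates controlling a province from owning it. A toy model of that distinction follows; the class and function names are hypothetical, and this is only a sketch of the mechanic as described here, not how EU II is actually implemented.

```python
from dataclasses import dataclass


@dataclass
class Province:
    name: str
    owner: str       # whose claim other rulers (and God) recognize
    controller: str  # whose army currently holds it


def win_siege(p: Province, attacker: str) -> None:
    """Starving the garrison out changes control, not ownership."""
    p.controller = attacker


def is_pretender(p: Province) -> bool:
    """Occupied but not recognized: the controller is not the owner."""
    return p.controller != p.owner


def cede_by_treaty(p: Province, new_owner: str) -> None:
    """Only a peace treaty with the current owner transfers the province."""
    p.owner = new_owner
    p.controller = new_owner


def annex_if_sole_province(p: Province, attacker: str, owner_province_count: int) -> bool:
    """The exception: a country that is a single province can simply be taken."""
    if owner_province_count == 1 and p.controller == attacker:
        p.owner = attacker
        return True
    return False


bretagne = Province("Bretagne", owner="England", controller="England")
win_siege(bretagne, "France")
print(is_pretender(bretagne))  # True: France holds it, England still owns it
cede_by_treaty(bretagne, "France")
print(is_pretender(bretagne))  # False
```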

This leads to hours of fun. So let's say you're France, and you want to assert your claim to Bretagne, which is owned by England. Sure, you can go conquer Bretagne, but you'll probably also send some troops to Britain to take over Cornwall and Kent, and beat up on British troops in the field for a year or so, in order to make the British desperate enough that they'll start suing for peace and be willing to part with Bretagne, and perhaps some lucre, too. My personal version of the Hundred Years War Part Deux ended with me in control of half of England, as well as the keys to their lovely colony of Massachusetts. Really, the British are quite tame once you've knocked down Buckingham Palace a few times.

Raising a military is an expensive proposition, and you have to worry not only about taxes, but about support, attrition, and the disparity in technology levels between you and your opponent. It is challenging, if a bit unrealistically whack-a-mole-like -- defeated armies "retreat" in seemingly random directions, sometimes deeper into your own territory, so you have 16th century armies engaging in what seems like maneuver warfare, accidentally. It can be frustrating.

Religion

Provinces have religions (and cultures, too). Catholic, Orthodox, Protestant, Sunni, Shia, Buddhist, Hindu...all the major religions are represented (although not Zoroastrianism. Yea, verily, the Wise Lord Ahura Mazda shall punish the developers for their perfidy.) Religion is the backdrop against which politics moves throughout the years. It is more difficult to wage a war against a country that shares your religion (it damages the stability of your realm), and provinces of a different religion have a tendency to rebel more frequently (depending in part on how tolerant you decide to be of other religions). You can send missionaries around the world to convert the infidels.

Trade and Colonization

The game has a fairly opaque concept of "centers of trade," where your merchants compete for goods; I couldn't quite wrap my head around it or enjoy it. Colonization is expensive and takes forever, which makes sense, since it did in real life. You'll fight hostile natives (if there are any), plague (the game accurately models the dictum "Don't try to colonize sub-Saharan Africa, you idiot"), and the other great and not-so-great powers.

Exploration is a bit...strange. Put simply, you can't just go off into terra incognita and discover the world; you need a special army: an explorer (for seas) or a conquistador (for land). You can't buy them, find them, or hire them. You get one when the game decides to give you one; Spain and Portugal get plenty, early on, and everyone else gets few to none. This is part of what I mean when I describe the game as a "history simulator." There will be no alternate histories here (in the main game, at least) where France discovers the New World.

Hail, Mecklenburg!

One of the major changes from EU to EU II was the addition of the ability to play any country, not just the great powers. Want to play as Japan in 1419? You can do it. Brandenburg, Mecklenburg, or Tuscany? You got it. Playing the game as a tiny power has a very different feel from playing as a great power (it's the difference between "Can I conquer the world?" and "Can I survive the next two years?").

One of the things that recommends the EU series of games is: they're dirt cheap. You can get the original Europa Universalis for $5 on the remainder shelves of most big PC software chains, and EU II is going for around $10 to $15. That was, in fact, what convinced me to take a risk on it, and if I view my investment as "I spent $15 to get a really cool map of the world in the year 1500", it seems sensible to me.

The Downsides

The user interface is terrible. Of course it's clunky and unintuitive: it's a strategy game put out by a European software house, and ergonomics in software are against the law there. More than that, it has a disturbing mouse-feel. By that I mean that the mouse tracking feels sloppy and inadequate, like they somehow misused DirectX and didn't get it right. At first I thought this was specific to the Mac version of the game, but I've since played on the PC and it's the same thing. I can't quite put it into words, but the mouse just doesn't move right. That makes the fact that the UI elements themselves are all undersized and poorly marked that much worse.

The game is slow. No, slower than that. Glacially slow. Not in terms of how fast your pieces move, but just in terms of pacing. Obviously, some people like that in this class of game. I'm not saying it's an out-and-out negative, but it's a strange thing to say to yourself "OK, well, that was a good round of conquest. Now I'll watch the days go by one at a time for three years until my reputation improves."

The biggest negative of the game, for me, is that it fails to coalesce into something more than a toy; at times it feels more like Europa is playing you, rather than vice versa. What is the game looking for? What inputs can I give it to make it happy? I admit that that is a vague description, but it's how I feel: I have fun looking at the game. I have fun thinking about the game. But I don't have all that much fun actually playing the game. I think the detailed After Action Reports that avid players post on the publisher's forums attest to this: what's fun in Europa Universalis is, in large part, the mirror it holds up to the history buff's psyche, rather than the mechanisms and machinations of the game itself.

Additional Resources

Here are some links you can follow while you're thinking about how cool maps are:

- Greg Costikyan loves the game

- The EU forums are full of many tips from fans and fanatics.

- You can get the game at many places, including Amazon

Why I don't buy a Silvia, or Ode to La Prima


by psu

Home espresso machines are a big business. For a few hundred dollars you can get a machine on par with the stuff that Starbucks is using to make those Venti half-caff double caramel 2-pump vanilla macchiato smoothie drinks for the local teenage set. But here is why I'd rather just go to La Prima Espresso.

Really good coffee is a complicated thing.

My wife didn't drink coffee, and in fact hated it, for at least the first 15 years we were together. This is because coffee in the U.S., for the most part, sucks. I believe this is for a few reasons:

1. The coffee is not fresh. Most places use a service that delivers packets of preground coffee that have been aging for months.

2. The coffee is boiled. Most places use drip coffee machines or, worse, those industrial-strength brewers that boil the coffee and then keep it in a tank at a super hot temperature for hours. By the time you see it, the coffee is long dead.

3. The Starbucks curse. Starbucks has made espresso and boiled fancy coffee almost universally available. Unfortunately, only the busiest Starbucks goes through enough coffee to have fresh grounds and people who know how to make a good shot. So most of the time you get tasteless crap with too much milk.

The first coffee my wife drank was a café au lait at Café de Flore in Paris. This is some of the best coffee I've ever had. It's basically a triple or quad shot of espresso in one small cup, and hot milk in another. You mix it into little mugs at the table. Let us compare:

1. The coffee is super fresh. This café sells so much coffee that they have to buy new stuff every day.

2. The people know how to make espresso. The espresso here is so good that it has a rich nutty flavor and little or no hint of bitterness. This is the result of perfect brewing. Every shot is done fresh and the grind and tamping are perfect for the machine they use. Getting stuff this wired in is the result of years of practice and expertise.

In Pittsburgh, we have a single coffee place that can reach this level of perfection on a regular basis: La Prima Espresso in the Strip. As in Paris, the coffee is always fresh. Like the café in Paris, the people at La Prima have been making espresso for years and know just how to do it. In fact, when you go, you should always strive to have one of the veteran bar people make your shot for you, because the ones of lesser experience will only disappoint.

Finally, La Prima does not commit the Starbucks Cappuccino Sin. To wit, the La Prima cappuccino is not some mutant drink wherein a tiny shot of coffee sits at the bottom of a mile-deep sea of foam. The drink is as it should be: hot, slightly foamy milk mixed in with a perfect espresso shot so that the foam from the coffee mixes with the steamed milk to form a perfect bond of milky, rich, smooth, nutty, perfect coffee goodness.

For me, the La Prima cappuccino is the closest thing to the apotheosis of coffee that I've had, short of that café au lait in Paris. It would take me years to come even close to doing something as perfect in my own home, with expensive equipment that is too loud and takes up too much space in my kitchen.

Therefore, as pretty as the Silvia is, I don't think I'll buy one. I'd just make bad coffee with it, and that would be tragic.

March 16, 2004

Platforms in Play


by peterb

I play video games, on average, maybe an hour a day. Sometimes more, sometimes less, but on average probably 1/24th of my adult life is spent playing videogames. That's quite a lot.

I have a love of the game medium that is wide and deep. For many years now, I've played games both on the PC (Windows and Mac) and on more or less every console in vogue. I spend time and money on gaming as a hobby. And lately I've noticed that a greater percentage of my playing time is devoted to games on a console, as compared to games on the PC.

Why is that?

Although it's popular for people to blame cost, that's not really a major factor for me. I've got my PC. I've got my consoles. They're all paid for. I'm asking "What are the feelings that make me reach for the console rather than the PC when I'm in a gaming mood?"

For one thing, there's the comfort factor. I sit at a desk in front of a computer all day long. Perhaps somewhere in the back of my mind is the feeling that sitting at a desk in front of a computer in my leisure time too is wrong somehow -- playing a game is psychologically transformed into work. This holiday season, when I had a huge stack of games that I hadn't made progress in, I had a brief period where I was actually feeling guilty that I wasn't playing enough games. When games are work instead of fun, I'm less likely to play them.

I can play a console game from the couch, sitting well back from the monitor, which is an attractive, large TV. Friends can play or watch at the same time in the same room, which makes the console experience a bit more social, comparatively speaking. If I'm playing Project Gotham Racing 2, I can play standing up (everyone knows that if you lean when turning left, the car turns faster, right? Just like how you can steer the ball when bowling.)

Lastly, there's the "just works" factor. This weekend while preparing my review of The Battle for Wesnoth, I decided to fire up Warlords III to refresh my memory of it. Same hardware configuration as when I used to play it, same disc, but now, presumably because of some magic Windows update, the game no longer works -- it crashes after a few minutes. I spent about 2 hours downloading various driver updates and trying different configurations, but in the end I was foiled. Congratulations! This is what it's like to play games on the PC. Get used to it.

When I want to play a game on the PS2 or Xbox, I walk up to it, hit the power button, put the disc in the drive, and I'm playing in a minute or so. No fuss. No wondering if there will be some subtle incompatibility between the game and my sound card. It all just works.

Perhaps this is just another example of how specialization brings convenience. If you really want to, you can make toast by sticking a piece of bread on a spit and holding it above a flame, or by putting it in the oven for the right amount of time. Everyone has an oven. Everyone has a stove. But everyone also has a toaster. You don't hear people saying "Hey, don't use that toaster -- this Viking range is much more powerful!" Yet people make that argument about PC games versus console games all the time.


A year ago I would have said that a PC was the better choice for online gaming, but frankly the Xbox Live user experience has so far exceeded my expectations that I no longer hold that opinion. I've drunk the Kool-Aid. Here's my money: a few bucks a month to pay for a voice-enabled rendezvous service that lets me play with my friends rather than a bunch of rude 13 year olds is well worth it.

Will I keep playing PC games? Sure, especially the smaller, independent ones. But the sharp dividing line of quality that used to exist between PC and console games is gone. As time goes on, I find that the ergonomic advantages of consoles outweigh those of the PC for all but the games with the quirkiest user interfaces. I'm already choosing to play most games available for both PC and Xbox in their Xbox form.

Toast, you see, should be made in a toaster.

Additional Resources

This is what we talk about when we talk about games:

- Brad Wardell of Stardock explains why the writing is on the wall for PC gaming, and how developers are going to have to adapt.

- Silver Spaceship's Chromatron is one of the best little games to come along in a while. PC and Mac.

- One of the last great small game companies is Ambrosia.

- For those of you who wonder "What ever happened to Silas Warner?"

- My review of The Battle for Wesnoth.

March 14, 2004

Gjetost: Freakish Norwegian Caramel Cheese


by peterb

I have a cheese problem.

My problem centers around the fact that the two best cheesemongers in town (Penn Mac and Whole Foods) are somewhat inconvenient for me to reach without planning. So I often find myself in the local supermarket, Giant Eagle, which purports to have a good selection of cheese. And they do: in the abstract, their selection is "ok." Nothing fabulous, but they often have cheeses that I would like to eat, especially if I haven't had time to pick up something great at Penn Mac.

However, for some reason that I don't fully comprehend, Giant Eagle wraps their cheese in a plastic that makes all of their cheese taste disgusting -- it has a pervasive, oily, plastic aroma that manages to penetrate to the core of the stinkiest Stilton. Some middle-level manager probably had to go out and do hard research to locate a plastic that was this effective at completely ruining cheese. So I am caught in an infinite cycle wherein I am craving cheese, but I have no cheese, and I'm in the supermarket, and Giant Eagle has a type of cheese that I want, and I know that it will probably taste like rancid plastic but I still convince myself that somehow it won't taste bad this time, and I buy the cheese anyway, and I bring it home, and it tastes like plastic and I am sad and swear that I'll never do that again.

Since I don't seem to be able to break my habit of buying cheese at the supermarket when I'm craving it, I've developed a tactic designed to minimize the risk: only buy cheeses that were factory-wrapped, rather than cheeses cut and wrapped at the supermarket. These generally end up being not top-of-the-line cheeses, because of the way they are packaged and sold, but my logic is that it's less depressing to buy a cheap cheese that turns out to be not very good than it is to buy a superb Stilton that tastes like plastic because of an incompetent cheesemonger's stinky packaging.

Following that tactic today led me to buy "gjetost," which I knew nothing about other than it is Norwegian and comes in a cute small square package.

Gjetost is...odd. I am not entirely convinced it is really cheese. I think it may actually be a very large piece of Bit-O-Honey candy.


Eating this candy cheese, I thought "this can't possibly be right," so I turned to the internet, which confirmed that, yes, this is indeed what gjetost is supposed to be like. It's a cow's and goat's milk cheese that is prepared such that some of the lactose caramelizes. One site suggested that it's common in Norway to enjoy it with a cup of coffee for breakfast. I happened to have just brewed a pot of coffee, so I tried it: it didn't improve the experience substantially.

People describe gjetost as a "love it or hate it" experience. I don't love it or hate it. But I might give out slices to trick or treaters next Halloween.

Additional Resources

Remember: a life without cheese is not worth living.

- Information about gjetost.

- How to make your own gjetost.

- Or, as obsessive gjetost fans have discovered, you can buy it over the internet.

- Gjetost is marketed in the US by Nestlé, Inc. as Bit-O-Honey.

- The Pennsylvania Macaroni Company is the best place to buy cheese in Pittsburgh.

- I'd much rather be eating Ami du Chambertin.

March 13, 2004

Stupid Symlink Tricks


by peterb

I do all of my work on my laptop. I have an external drive for large projects, but the desire to keep everything on the laptop means that I really only want to spend internal hard drive space on the essentials.

I love LiveType, but I only use it once in a blue moon, generally when finishing a project up. Unfortunately, Apple's dopey installation program requires that LiveType (like all the Pro apps) be installed completely on the internal drive. Here's how to route around their bogosity.

All we're going to do is use Unix symbolic links, which let one directory stand in for another, to store the most egregiously large directory on our external drive. Symbolic links are created with the ln command (specifically, ln -s).

(I am assuming that your external drive is hooked up during this procedure. For instructional purposes, let's assume the drive is named external.)

That's it! You're done. (You could of course just do sudo bash and then issue the mkdir, mv, and ln commands from a root shell, but that violates the principle of being conservative when wearing your root hat).

You can do this not only with LiveType, but also with Soundtrack, and even with iTunes, if you're willing to accept parts of your collection being unavailable when you're not tethered to your drive. Note that iTunes goes to greater lengths than most to disallow this technique (in particular, it insists that your "iTunes Music" directory be on a local drive), but you can set up symlinks within that directory for specific artists. I have two little shell scripts to let me migrate data back and forth as I desire. Here's one:

...so from within my iTunes Music directory, running offline.sh "The Beatles" migrates the entire Beatles directory to the external drive and sets up the correct symlinks so that I can still access it as needed. Of course, there's an online.sh to reverse the process.
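The offline.sh and online.sh scripts are shell scripts; what follows is not them, but a minimal Python sketch of the same move-then-link idea. The function names take_offline and bring_online and the directory arguments are hypothetical. It doesn't use sudo, so it is meant for directories you own (like the iTunes Music folder), and, as with the shell approach, try it on copies first.

```python
import os
import shutil
from pathlib import Path


def take_offline(name: str, music_dir: Path, external_dir: Path) -> None:
    """Move music_dir/name onto the external drive and leave a symlink in its place."""
    local_path = music_dir / name
    remote_path = external_dir / name
    if local_path.is_symlink():
        raise ValueError(f"{local_path} is already offline")
    if remote_path.exists():
        raise FileExistsError(f"{remote_path} already exists; refusing to overwrite")
    shutil.move(str(local_path), str(remote_path))  # like `mv`; copies then deletes across drives
    os.symlink(remote_path, local_path)             # like `ln -s remote local`


def bring_online(name: str, music_dir: Path, external_dir: Path) -> None:
    """Reverse take_offline: remove the symlink, then move the data back."""
    local_path = music_dir / name
    remote_path = external_dir / name
    if not local_path.is_symlink():
        raise ValueError(f"{local_path} is not a symlink; nothing to bring back")
    local_path.unlink()                             # removes only the link, not its target
    shutil.move(str(remote_path), str(local_path))
```

Usage would look like take_offline("The Beatles", Path("~/Music/iTunes/iTunes Music").expanduser(), Path("/Volumes/external/iTunes Music")), where the external path is an assumption based on the drive being named external. Refusing to overwrite an existing target keeps a repeated call from clobbering data.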

One thing of note is that most HFS+-savvy applications hate symlinks and do unexpected things when asked to store data in a symlinked directory. So if, after migrating data offline, you try to add anything to those directories (for example, by ripping another Beatles album, say, Let It Be, in iTunes), it will end up sticking that in ~/Music/iTunes/iTunes Music/Let It Be rather than in ~/Music/iTunes/iTunes Music/The Beatles/Let It Be. It still works; it's just unpleasant. This means that you should always be prepared to undo your symlinks before, for example, installing software updates, lest you confuse the poor lost little Finder.
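Before undoing links for a software update, it helps to know which entries are currently symlinked and whether their targets are reachable. A small Python sketch; the function name symlinked_entries is hypothetical.

```python
from pathlib import Path
from typing import List, Tuple


def symlinked_entries(music_dir: Path) -> List[Tuple[str, bool]]:
    """Return (name, reachable) for every symlink directly inside music_dir."""
    found = []
    for entry in sorted(music_dir.iterdir()):
        if entry.is_symlink():
            # exists() follows the link, so it is False when the target
            # (e.g. a directory on an unplugged external drive) is missing.
            found.append((entry.name, entry.exists()))
    return found
```

Calling it on the iTunes Music directory lists each artist that is currently offline; a False means the external drive needs to be mounted before that artist can be brought back.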

I hope this little trick helps you, and be sure to make backups of any unrecoverable data before trying it, lest a careless slip of the fingers (or a mistake on my part!) cause data loss.

March 12, 2004

Para Los Muertos


by peterb

Recordaremos. (We will remember.)

Our thoughts and hopes are with you, Madrileños.

You are not alone.

Review: The Battle for Wesnoth


by peterb

Over the years, one of my favorite types of computer games has fallen under the rubric of "turn-based strategy": basically, traditional units-in-hexes wargames where the computer takes care of all the bookkeeping for me. The first of these, I'm guessing, was Empire for the VAX/VMS system (and yes, I played it).

Empire had supply points (cities) that could be captured and that could produce a variety of units with different capabilities (land, sea, air) and different attack and defense values. That's the core of this sort of game. There have been hundreds of implementations of these throughout the years. In my college years, I enjoyed XConq, which was a very thorough (though baroque) implementation. One feature of this sort of game is that the theme is more or less completely disconnected from the mechanics. Draw pictures of artillery pieces and tanks on the chits, and it's a World War II game. Change the artillery pieces into men in funny hats and the tanks into trolls, and it's a "fantasy" game. Change the trolls into cavalry and now you're in the Napoleonic era. So one core game mechanic can be used for many different vibes.

For my money, the best in class of these games was the Warlords series. The original Warlords, which had both Mac and DOS versions, took place on a large continent named Etheria. Eight kingdoms each started with a single castle, a hero, and an army. Castles generated income, which could be used to fund more armies (and to support them; you had to pay upkeep for your existing troops). The goal was simple: take over the world. I liked Warlords better than the alternatives because the designer made some smart simplifying assumptions about unit management and generation. Often in games of this class the early game is fascinating, and then by the late midgame you are staggering under the logistical weight of managing several hundred units, and suddenly it's not fun anymore. Warlords avoids that syndrome.

The series reached its apogee of playability in the superb "Warlords II Deluxe." This added the concept of experience for heroes, more customization of what types of troops towns could produce, the ability to 'vector' troops from where they were marshaled to a city where they should be deployed, and the idea of searching ruins for magical treasures which made the hero a better commander (this turns out to be less annoying than it sounds: rather than turning the game into a hunt for loot, the ruins were generally distant enough that setting out for one meant taking a serious strategic risk). Warlords II Deluxe combined fast, intuitive play, a good GUI, a wonderful set of included campaigns and battles, a great map editor that spawned an incredibly prolific fan community, and -- unfortunately -- was written such that if you are running any OS more recent than Windows 95 (or, on the other side of the world, MacOS 9) you have little hope of actually playing it anymore. Warlords II Deluxe is one of the games I've considered buying an ancient PC for, just to be able to keep playing it.

Steve Fawkner, the author of Warlords, has continued to turn out the sequels. Each sequel has, to me, played progressively worse. I hate to say that, because he has always been kind and courteous to me, and I know that there are countless improvements under the hood. Steve is an AI geek. He'll talk about how the pathing in Warlords III blows away the WL II version, and how much more challenging the opponent is, yadda yadda yadda, but all I know is that it took me 15 seconds to play a simple turn of Warlords II Deluxe, and it takes 5 minutes to play a simple turn of Warlords III, 4 minutes and 46 seconds of which is spent watching the lovingly rendered animation of an orc move deliberately across the screen, pausing to make himself a hot toddy. You can actually watch the molasses drip from the jar into the teacup. It's very detailed. And oh so very slow.

Warlords IV: Heroes of Etheria likewise continues the trend towards more bigger slower. If you don't believe me, download the demo and try it for yourself.

So: newer doesn't always mean better. Prettier graphics don't always mean better. I would argue that some games can be made better by giving the player a view of his units that has less graphic detail, not more. Graphic designers who work in the real world understand this. Consider the icons used by the Olympics to denote sports. We could, surely, replace those with 3D holographic movies of people actually participating in those sports. And it would probably result in everyone having to take a few more seconds to think about what they were looking at, rather than immediately mapping the simple icon to "Oh, that's skiing".

Atari Football

I feel bad slamming the newer Warlords games in this way, because I'm sure it's not as if Steve walked into the office one day and said "Hey, I've got this great idea: let's degrade the user experience somewhat and make the game slower." I have no doubt that he shopped an incremental improvement to Warlords and got told by a publisher "If it doesn't look as good as this other game over here, we won't be able to get it on the shelves, so therefore we won't publish it for you, so therefore no more Warlords sequels." I've been in that position myself, albeit not with games. If you're not a sales and marketing expert and the marketing guy says "Your product must have feature X or no one will buy it," you either decide that you know more about marketing than he does and ignore him, and then you go out of business and starve to death and people laugh at you, or you shrug, sigh, go back to your office, and add the feature the marketing guy asked for, even if you personally think it's sort of stupid.

Thinking about it a little more, I'm not really saying "I wish Warlords III (or IV) had worse graphics." I'm actually making a complaint about the user interface. I'm saying "In the old version, I could accomplish this task in X seconds, and in the new version that same task takes four times as long." (Please note that I haven't gone and actually measured the times. I'm speaking of impressions here, but I think that that's valid: half of UI design is about managing user perception.) Perhaps if I hadn't used the older, more responsive UI I wouldn't care. Maybe I'm not Steve's market any more. Maybe I'm more persnickety about response time than other old Warlords fans. But fundamentally, this is the same complaint people make about Windows XP taskbar animations, or the 'genie' effect to and from the dock on Mac OS X.

So the reason this all matters is that I'd rather spend time playing the (open source!) game The Battle for Wesnoth than buy Warlords IV, because Wesnoth has better response time, even though I am 100% positive that Warlords has better graphics, better sound, better production values, fewer misspellings, and superior computer AI. That's how much the response time matters to me. I'm playing a strategy game. Strategy games are like chess. How often would you play a chess game if every time you told the computer to move a piece you had to watch it move for 15 seconds? Another way of saying this is: I am more confident that there will be patches to Wesnoth to improve enemy AI than I am that I'll ever be able to make Warlords III's UI more responsive.

The Battle for Wesnoth gets the response time issues almost exactly right, especially when you turn on the "fast animations" option. It's a clever game, and a few aspects of its tactical combat resolution, which I haven't seen in a game of this particular type before, are worth mentioning. Each unit may have multiple types of attacks (for example, perhaps your bowmen also carry a dagger, which is not as strong as their bow). When you attack, you have the option of choosing the type of attack (fire your bows, or stab the enemy with your dagger?). The enemy must respond with an attack of the same kind. If you have bows and the enemy is a thug with a club and no ranged attack, you can attack with impunity. This introduces a rock-scissors-paper aspect to the combat that is pleasing without being trivial to resolve.

Thinking about it a bit more, I now realize that I have seen this before, almost -- it's very similar to the "hard attack" / "soft attack" handling in Panzer General. There's another Panzer General-like way in which Wesnoth differs from Warlords. In Warlords the natural unit of control is the city, which provides a defensive bonus for units within it, generates income, and potentially builds new units. Unlike units in Warlords, units in Wesnoth exert a zone of control (as in Panzer General and other games), so you can actually use your forces to shape the battlefield, rather than simply attacking or defending with them.
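For the curious, here's a minimal sketch of the retaliation rule as I've described it, not Wesnoth's actual code: I'm assuming every attack is tagged either "melee" or "ranged", and that the defender simply answers with its strongest attack of the same kind. The `Attack`, `Unit`, and `choose_retaliation` names and the damage numbers are hypothetical, made up for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Attack:
    name: str
    kind: str    # assumed to be either "melee" or "ranged"
    damage: int  # hypothetical damage value


@dataclass
class Unit:
    name: str
    attacks: list[Attack]


def choose_retaliation(defender: Unit, incoming: Attack) -> Attack | None:
    """Return the defender's counterattack, or None if it has no attack of the same kind."""
    matching = [a for a in defender.attacks if a.kind == incoming.kind]
    if not matching:
        return None  # no matching attack: the attacker strikes with impunity
    # Assumed simplification: the defender picks its strongest matching attack.
    return max(matching, key=lambda a: a.damage)


# The bowman-versus-thug example from the text, with made-up numbers.
bowman = Unit("Bowman", [Attack("bow", "ranged", 6), Attack("dagger", "melee", 4)])
thug = Unit("Thug", [Attack("club", "melee", 5)])
```

Shooting the thug with the bow gets no answer (`choose_retaliation(thug, bowman.attacks[0])` is `None`), while stabbing him with the dagger invites the club in return. That asymmetry is where the rock-scissors-paper flavor comes from.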

The scenarios were designed with care. The tutorial was a bit disappointing, and somewhat disorganized, and the unit recruitment rules are not well explained at first (you quickly figure it out once you move your hero out into the fray and then discover that you can't hire new units if you're not in your keep.) The first real scenario made things much more interesting, however, and the difficulty ramp is appropriately challenging without being impossibly steep. I liked that scenarios support victory objectives other than "kill everyone else" -- the first two scenarios in the first campaign, for example, are "reach this spot on the map without these two VIPs dying" and "kill foozle OR survive for 12 turns", respectively.

The game is still in beta, and there are some areas that need work. For example, while different terrain types exist and are implemented, their effect on the game is fairly opaque: the impact on movement is straightforward, and terrain clearly affects combat too, but the game doesn't tell you how. When you decide what attack to use, the game tells you your percent chance of success, so you end up having to intuit the effect terrain is having by comparing those percentages across several battles. Personally, I'd rather see something like "+10% bonus due to being in the woods, +10% bonus for bravery" and not know my absolute chance of success than the inverse. Better yet, give me both. Also, while I've already said I like the responsiveness of the game overall, there are definitely GUI nits; right-clicking is supposed to bring up a context menu, but this doesn't work on a Mac running OS X. There are too many spelling and grammar errors; hopefully they'll fix those soon (I know, I know, it's an open source project: I should volunteer).

The music is nice and understated. I enjoy being soothed while slaying the armies of darkness.

The Battle for Wesnoth is one of the most polished games I've seen released under the GPL thus far. I approve of its SDL/multi-platform nature, and of the fact that it's clearly made by people who understand and love the genre. I look forward to seeing it develop more, and I hope the team stays together and works on other projects as well. Perhaps the answer to the unceasing but understandable pressure from commercial game publishers for sequels that focus on flash rather than on improving the user experience is for small projects to demonstrate that fun does not require seed funding from Electronic Arts to exist.

Personal to Steve Fawkner: I can't promise you the sort of mad cash you get for making the top shelf at Best Buy, so I realize that I can't make a business case to you as well as your publisher can. But here's an offer: Your newest game, Warlords IV: Heroes of Etheria, retails for $19.99 (at least at Amazon), and you just released Warlords II Deluxe for the PocketPC. If you update Warlords II Deluxe to work on modern Win32 systems -- most of which I bet you in fact had to do to release the PocketPC edition -- I'll pay you $19.99 for that, making it the third time I will have bought the game. I bet there are a few other people out there who would do the same, although, honestly, probably not more than a few hundred. But, y'know. While we may not be a market, we are dedicated fans.

Don't run it by your marketing team. This can be our little secret.

Additional Resources

Some of these links may be of use to those of you who like turn-based strategy games.

- The Battle for Wesnoth is available for download.

- The Warlords series is what everyone else in the consumer space imitated.

- Xconq wasn't the first of this type of game, but it's the oldest ancestor still being actively developed.

- Arguably, the Panzer General games were a better implementation of the same basic gameplay mechanic with a different mise en scene (memo to self: try Fantasy General some time) (additional memo to self: Now stop thinking of General John Shalikashvili in a silk teddy. Ow ow ow ow ow.).

- Heroes of Might and Magic is another series of games in the same class that got worse with each successive iteration after the second. Milk that cash cow, boys! Branding! It's all about branding!

March 09, 2004

Formula 1: It's the Coverage, Stupid!

by peterb

Well, the Australian Grand Prix is over, and once again I have to face ridicule from people like Dushyanth, who ask:

"Why do you watch this 'sport'? All they do is go round and round in circles, and in the end Schumacher wins."

As time goes by, I have fewer and fewer answers to that question. But instead of talking about Formula 1 as a sport, let's discuss it as a media event.

Supermodel and Troll

Passes were ignored. Cars would burst dynamically through a camera's field of view, about to make a spectacular overtake, and the camera would remain still, pointing at the bereft, empty stretch of road behind the pass. Occasionally, a camera would accidentally be about to catch a pass in progress, at which point the program director would cut to a shot of the pit crew, filing their nails.